
Written by Gareth Simono, Founder and CEO of Agentik {OS}. Full-stack developer and AI architect with years of experience shipping production applications across SaaS, mobile, and enterprise platforms. Gareth orchestrates 267 specialized AI agents to deliver production software 10x faster than traditional development teams.

Business & Strategy | January 12, 2026 | 21 min read

ROI of AI Adoption: Real Numbers, No Hype


The AI ROI debate is over. Companies have adopted AI, enough time has passed, and the data is in. Here is what the numbers show, including where AI delivers and where it does not.

The era of AI ROI hypotheticals is over.

For two years, the conversation was theoretical. AI could save you X. AI might improve Y. Productivity gains of Z are possible. Everyone was citing pilot programs and early experiments. The actual data was thin.

That era has ended. Companies have deployed AI at real scale. Enough time has passed to measure actual outcomes. The data is in, and it is more interesting than the hype suggested, for two reasons.

First, the real numbers in high-fit use cases are better than most predictions. Not marginally better. Dramatically better. Second, in low-fit use cases, AI barely moves the needle and sometimes makes things worse. Most prediction articles glossed over the second part. Both are useful to know before you commit budget and organizational capital.

I have spent two years gathering actual outcome data from companies that adopted AI at meaningful scale. What follows is what I found.

Software Development: The Three to Five Times Number

Forget the ten-times or hundred-times productivity claims. The consistent, measured number across companies that publish honest data: three to five times developer productivity improvement.

What that means concretely: a developer who previously shipped one substantial feature per sprint now ships three to five. Time from initial commit to production-ready code decreases by 60-75% for typical feature work. Pull request volume triples.

But the improvement is not uniform. This is the nuance that gets lost in the headline numbers.

| Task Type | Productivity Multiplier | Notes |
|---|---|---|
| Writing new code from specs | 5-8x | Largest improvement |
| Refactoring existing code | 3-4x | High if good context provided |
| Writing tests | 4-6x | Consistent improvement |
| Debugging new issues | 1.5-2x | Hard part is understanding, not writing |
| Complex system architecture | 1.2-1.5x | Generates options, human still decides |
| Production incident response | 2-3x | Faster log analysis and hypothesis generation |
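Because the multipliers differ by task, a developer's overall speedup depends on how their time was split before AI. The sketch below combines per-task multipliers with a time-weighted harmonic mean (the same reasoning as Amdahl's law). The time shares are hypothetical, made up for illustration rather than taken from the data above, and the multipliers are the low ends of the table's ranges.

```python
def blended_multiplier(time_shares: dict[str, float], multipliers: dict[str, float]) -> float:
    """Overall speedup when each task type speeds up by its own factor.

    time_shares: fraction of pre-AI working time spent on each task (sums to 1).
    multipliers: speedup factor for each task type.
    """
    if abs(sum(time_shares.values()) - 1.0) > 1e-9:
        raise ValueError("time shares must sum to 1")
    # Each task now takes share / multiplier of the original time (Amdahl-style).
    new_time = sum(share / multipliers[task] for task, share in time_shares.items())
    return 1.0 / new_time


if __name__ == "__main__":
    # Hypothetical split of a developer's pre-AI time (illustrative only).
    shares = {
        "new code": 0.30,
        "refactoring": 0.15,
        "tests": 0.15,
        "debugging": 0.25,
        "architecture": 0.10,
        "incidents": 0.05,
    }
    # Low end of each range in the table above.
    low_end = {
        "new code": 5.0,
        "refactoring": 3.0,
        "tests": 4.0,
        "debugging": 1.5,
        "architecture": 1.2,
        "incidents": 2.0,
    }
    print(f"Blended speedup: {blended_multiplier(shares, low_end):.2f}x")  # Blended speedup: 2.37x
```

With this made-up mix, the slow-improving debugging and architecture work pulls the blended figure well below the 5x coding number. A team whose time is dominated by new feature work and tests lands much closer to the upper multipliers, which is why the task mix matters when comparing headline numbers.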

Debugging new issues is where developers expected AI to help most and found some of the smallest gains. The hard part of debugging is understanding the system well enough to form a hypothesis. AI helps with investigation and hypothesis testing once you have a lead. Finding the first lead in a novel system is still primarily a human task.

The bug rate reduction is the surprising winner. Teams using AI-assisted code review report 40-60% fewer bugs reaching production. Not because the AI catches everything. Because it catches the categories of bugs that humans miss when tired or rushing: obvious logic errors, missing null checks, SQL injection vectors, off-by-one errors in loops. The boring bugs. The ones you feel embarrassed about afterward.

This reduction in bugs reaching production compounds in ways that are hard to measure but clearly felt. Less time in incident response. Less technical debt accumulating from rushed fixes. Engineers spending more time building and less time firefighting.

Time-to-market improvement is the real multiplier. When development cycles compress from two weeks to three or four days, you do not just ship faster. You iterate faster. You run more experiments. You learn faster. The compounding effect of faster iteration cycles is worth more than the raw productivity number suggests.

The 3-5x productivity improvement at the individual level becomes a 5-10x competitive advantage at the organizational level when it enables faster iteration cycles.

Content and Marketing: Volume Is the Story

Marketing teams see the most dramatic volume improvements. Four to eight times content output is typical. I have seen cases of ten times output or more in content-heavy organizations.

One content marketer directing AI agents can produce more written content than a five-person team could manually: blog articles, social content, email sequences, ad copy, landing page variants, SEO content, and product descriptions. All of it is produced at scale, consistent with brand guidelines, and at quality levels that perform within a measurable margin of human-written content.

The quality question gets complicated. Here is what the data shows:

For engagement metrics (click rates, time on page, social engagement), AI-generated content guided by human strategy performs within 5-15% of human-written content. The gap varies by content type. Short, high-volume content (social posts, email subject lines, meta descriptions) shows smaller quality gaps. Long-form thought leadership shows larger quality gaps.

For conversion metrics, the gap depends heavily on how carefully the AI is directed. AI content with thoughtful human strategic direction converts at 85-95% of human-written conversion rates. AI content with minimal direction converts at 60-75%.

Cost per piece of content drops 70-90%. This is not a marginal improvement. This is a structural change in the economics of content marketing. Strategies that were previously cost-prohibitive become viable.
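Cost per piece and conversion rate can be combined into cost per conversion, which is closer to what a marketing budget actually pays for. A minimal sketch, assuming every piece gets the same traffic so conversions per piece scale directly with the conversion rate; the function name and scenario labels are illustrative, while the percentages come from the ranges above.

```python
def relative_cost_per_conversion(cost_reduction: float, relative_conversion: float) -> float:
    """AI cost per conversion as a fraction of the human-written baseline.

    cost_reduction: drop in cost per piece (0.70 means 70% cheaper).
    relative_conversion: AI conversion rate divided by the human rate.
    Assumes equal traffic per piece, so conversions scale with the rate.
    """
    if not 0.0 <= cost_reduction < 1.0:
        raise ValueError("cost_reduction must be in [0, 1)")
    if relative_conversion <= 0.0:
        raise ValueError("relative_conversion must be positive")
    return (1.0 - cost_reduction) / relative_conversion


if __name__ == "__main__":
    # (cost reduction, conversion rate relative to human-written content)
    scenarios = {
        "directed, worst case": (0.70, 0.85),
        "directed, best case": (0.90, 0.95),
        "minimal direction, worst case": (0.70, 0.60),
    }
    for name, (cut, conv) in scenarios.items():
        ratio = relative_cost_per_conversion(cut, conv)
        print(f"{name}: {ratio:.0%} of the human cost per conversion")
```

Under that equal-traffic assumption, even the least favorable directed scenario (35%) costs roughly a third of the human baseline per conversion, and the poorly directed case still comes in at half.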

Long-tail keyword targeting, which requires producing hundreds of specific articles that each serve small audiences, is now economically feasible for mid-market companies. Hyper-personalized email sequences that vary by industry, company size, and use case are now standard practice, not enterprise-only luxuries.

The marketing function that deployed AI intelligently is not just doing the same marketing cheaper. It is doing marketing that was previously impossible.

Customer Support: The Most Mature Data Set

Customer support is the function with the most mature AI adoption data because it has been deploying AI assistants for longer than most functions.

Routine inquiry automation rates of 50-70% are now standard for mid-market SaaS companies. Not for simple FAQ lookups. For multi-turn conversations that require retrieving account information, processing requests, and confirming actions. Real work that previously consumed human agent time.

Response time improvement is the headline. Average response time for routine inquiries: from 4-8 hours to under 5 minutes. For Tier 1 issues handled fully autonomously: response time measured in seconds.

Customer satisfaction (CSAT) shows a nuanced pattern that most case studies omit:

| Issue Type | Before AI | After AI | Change |
|---|---|---|---|
| Simple inquiries (AI-handled) | 3.8/5 | 4.3/5 | +0.5 |
| Complex issues (human-handled) | 4.1/5 | 4.6/5 | +0.5 |
| Escalated issues (AI starts, human finishes) | 4.0/5 | 3.7/5 | -0.3 |

Simple inquiries improve because they are resolved faster. Complex issues improve because human agents now have more time and mental energy for them. Escalated issues decline slightly because the handoff experience needs more design attention.

The economic impact:

Before: 10-person support team, 300 tickets/day, $800K annual fully-loaded cost

After: 4-person support team, 300 tickets/day (50% higher complexity), $320K annual cost

Savings: $480K/year

Quality: CSAT improves on most issue types

The 4 remaining people are senior specialists, not junior generalists. Their jobs are more interesting. Their compensation is higher. The organization gets better outcomes at 40% of the original cost.
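The savings figures above are simple arithmetic on the two annual cost lines. A short sketch that reproduces them (the function name is illustrative):

```python
def support_savings(before_cost: float, after_cost: float) -> tuple[float, float]:
    """Return (annual savings, after-cost as a fraction of before-cost)."""
    if before_cost <= 0:
        raise ValueError("before_cost must be positive")
    savings = before_cost - after_cost
    return savings, after_cost / before_cost


if __name__ == "__main__":
    savings, ratio = support_savings(800_000, 320_000)
    # Prints: Savings: $480,000/year; new cost is 40% of the original
    print(f"Savings: ${savings:,.0f}/year; new cost is {ratio:.0%} of the original")
```

Because ticket volume stays at 300 per day in both scenarios, cost per ticket falls by the same 60%.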

Finance and Legal: High Value, Longer Adoption Curve

Finance and legal show excellent ROI when measured, but adoption has been slower due to regulatory and liability concerns.

Financial reporting and analysis automation shows 70-85% time reduction for standard report production. A financial analyst who previously spent 60% of their time on data compilation and formatting now spends that time on actual analysis and insight generation. Output quality, measured by the insight quality and accuracy of commentary, improves because the analyst is doing the work they are actually good at.

Legal document review is the standout use case. Initial contract review, checking for standard clause presence and flagging non-standard terms: AI handles this at 80% of the accuracy of a junior associate, at roughly 2% of the cost, and in minutes rather than hours. The human attorney reviews AI findings and makes judgment calls. Total review time: down 70-80%.

Important caveat: legal AI deployment requires careful validation in each jurisdiction and practice area. The general pattern holds. The specific implementation requires specialized expertise. Rushing legal AI deployment without that expertise creates liability.

Where AI Barely Moves the Needle

Honesty requires this section. Not everything shows impressive returns.

Strategic planning. AI generates scenario analyses and strategic options efficiently. The actual strategic decision, which involves judgment about organizational capabilities, political dynamics, and competitive positioning that AI does not have good information about, still requires human expertise. Productivity improvement: 1.3-1.5x at best.

Enterprise sales. AI assists with research, personalization, and proposal drafting. The deal itself still closes or does not based on human relationship quality. AI can make mediocre salespeople slightly better. It does not make average salespeople into great ones.

Original creative direction. Brand identity, campaign concepts, novel creative approaches. AI produces variations on existing patterns efficiently. It does not reliably produce genuinely novel creative directions. This is an area where human creativity is still meaningfully differentiated.

Highly regulated decision-making. Medical diagnoses, legal judgments, regulatory decisions. AI can assist with analysis, but accountability requirements mean humans remain in the decision loop in ways that limit efficiency gains.

These are not failures of AI technology. They are areas where the task genuinely requires something AI does not currently provide well. Knowing this prevents wasted investment.

The Compound Effect: Why Starting Early Matters

The single most important insight from the ROI data is the compound effect of AI adoption.

First deployment: good ROI. Team learns. Second deployment: better ROI. Patterns reused. Fifth deployment: excellent ROI. Organizational muscle fully developed.

Companies 18 months into AI adoption report 2-3x better ROI on new deployments compared to their first effort. The knowledge of what works, the tooling, the governance processes, the quality evaluation skills: all of these carry over to each subsequent deployment.

This means the ROI calculation for AI adoption is not just about individual deployments. It is about the compound value of organizational AI capability that builds over time.

A company that starts today will be better at its fifth AI deployment than a company that starts in 12 months will be at its first. The organizational learning advantage compounds.

AI adoption is not one project. It is a capability that gets cheaper and more effective with each iteration. The sooner you start building that capability, the better every future deployment performs.

FAQ

Q: What is the ROI of adopting AI for software development?

ROI is typically 300-500% in the first year, driven by 3-5x faster delivery, 40-70% lower costs, 50% fewer production bugs, and 3-5x more output per developer. The ROI compounds as teams become proficient with AI workflows.
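ROI percentages and speed multipliers measure different things. Standard ROI is net gain divided by cost, so a 300% ROI means the benefits were four times the investment. A minimal sketch; the cost and benefit figures are hypothetical placeholders, not data from the companies discussed above.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Return ROI as a fraction: (benefit - cost) / cost, so 3.0 means 300%."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


if __name__ == "__main__":
    # Hypothetical first-year figures: tooling, training, and setup costs
    # versus the value of time saved and costs avoided.
    cost = 100_000
    benefit = 400_000
    print(f"ROI: {roi(benefit, cost):.0%}")  # ROI: 300%
```

By the same formula, a 300-500% first-year ROI corresponds to benefits of four to six times the total adoption cost.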

Q: How quickly do businesses see returns from AI adoption?

Measurable returns appear within 4-8 weeks. Month one is setup and learning. By month two, velocity increases. By month three, cost savings become apparent. Full ROI materializes by month six when compounding effects become significant.

Q: What are the costs of NOT adopting AI for development in 2026?

The cost is competitive disadvantage: competitors ship 3-5x faster, operate with 50-70% lower costs, and achieve higher code quality. Companies without AI workflows struggle to recruit top developers and compete on delivery speed.




Related Articles

Business & Strategy22 min read

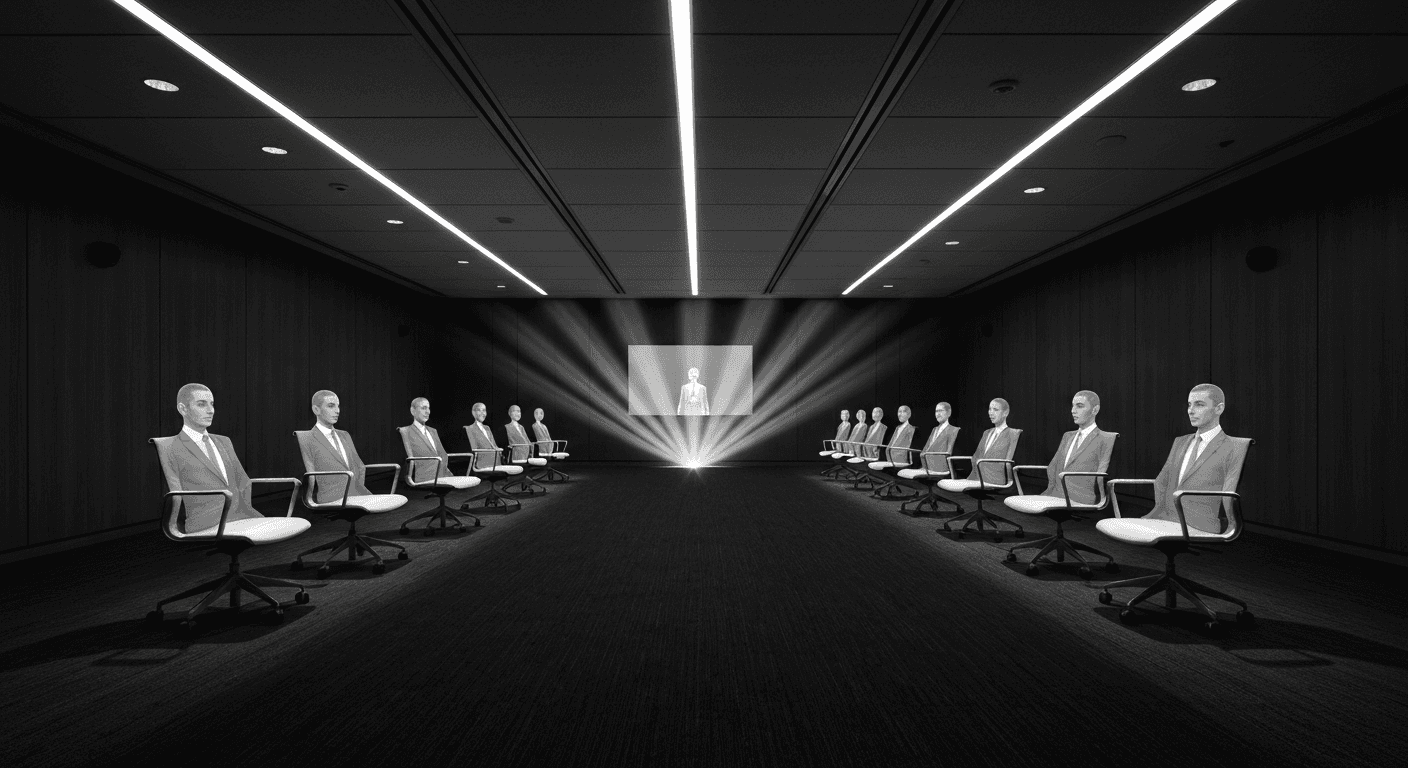

Replacing Teams with AI: The Real Playbook

The best results come not from layoffs and chatbots but from rethinking what organizations should look like if designed from scratch today.

Jan 9, 2026Read

Business & Strategy21 min read

AI Consulting: The New Gold Rush Playbook

Businesses know they need AI but have no idea how to implement it. That gap is where fortunes are made. Build the shovel business before the rush peaks.

Jan 14, 2026Read

Business & Strategy20 min read

AI-First Business Models: The Hidden Playbook

There is a large gap between bolting AI onto a business and building one around it. AI-first companies achieve software margins on service delivery.

Jan 6, 2026Read

Want to Implement This?

Stop reading about AI and start building with it. Book a free discovery call and see how AI agents can accelerate your business.