
Written by Gareth Simono, Founder and CEO of Agentik {OS}. Full-stack developer and AI architect with years of experience shipping production applications across SaaS, mobile, and enterprise platforms. Gareth orchestrates 267 specialized AI agents to deliver production software 10x faster than traditional development teams.

Business & Strategy | January 9, 2026 | 22 min read

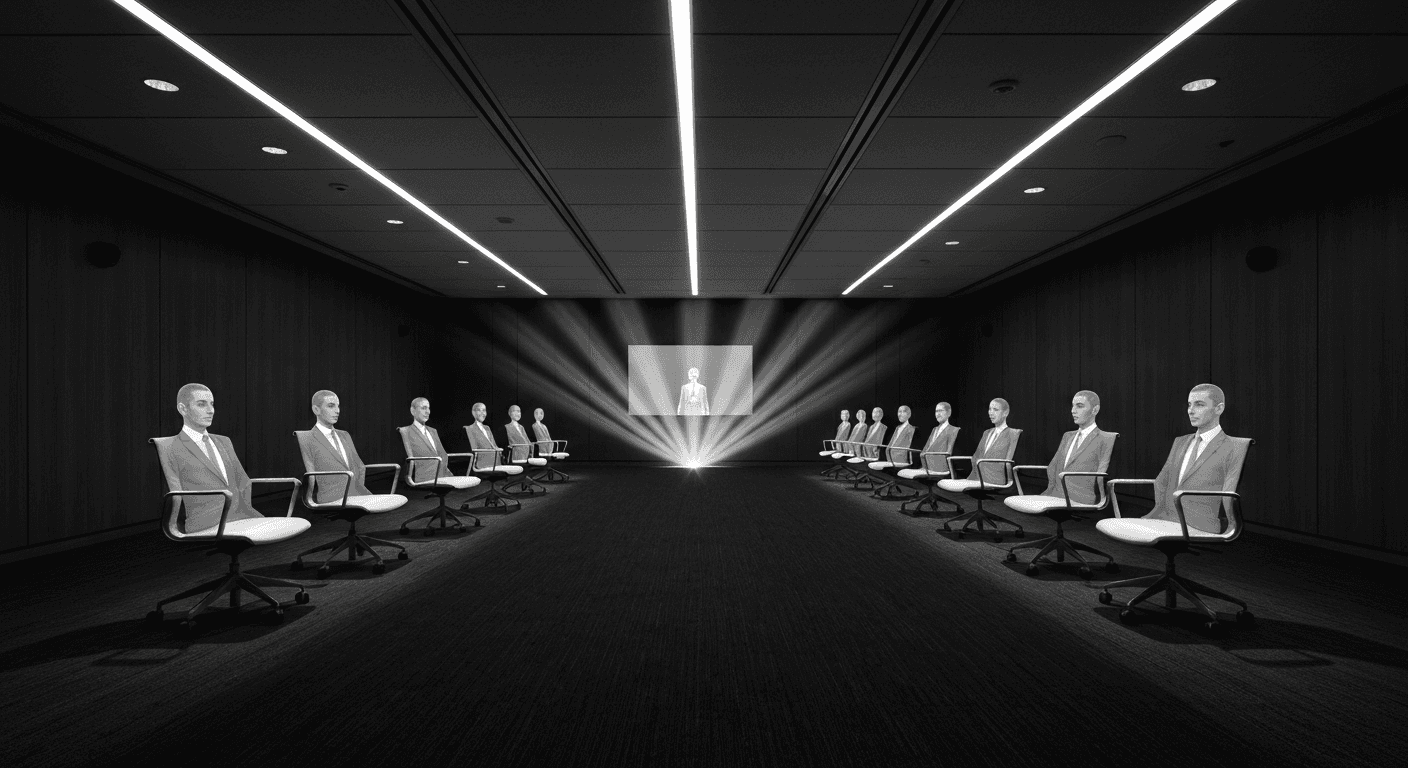

Replacing Teams with AI: The Real Playbook

Gareth Simono

Founder & CEO, Agentik{OS}

The best results come not from layoffs and chatbots but from rethinking what organizations should look like if designed from scratch today.

"Replacing teams with AI" sounds more ruthless than it is.

The phrase conjures images of mass layoffs and broken careers. The reality, at least in the organizations doing this well, is something different. It is about asking a genuinely hard question: if you were building this organization from scratch today, with all current AI capabilities available, what would it look like?

The answer is almost never "exactly what we have now, but with some chatbots added." It is a different structure with different team sizes, different role definitions, and different economic fundamentals. Some of those differences are uncomfortable. Most of them, honestly, make the organization better for everyone still in it.

The playbook matters because it is easy to do this wrong in ways that are both ineffective and destructive. I am going to give you the version that works.

Start With the Boring Work, Not the Exciting Work

Every organization has work that brilliant people hate doing. Report generation. Data entry. Content formatting. Responding to frequently asked questions. Code review for style consistency. Scheduling coordination.

These tasks are where AI automation should start. Not because they are the highest-value opportunity, but because they are the lowest-risk proving ground.

Starting with boring, high-volume, pattern-following tasks has three strategic advantages that are easy to miss.

First, success is easy to measure. "We used to spend 12 hours per week generating these reports. Now it takes 45 minutes." That is a number anyone can evaluate. It builds organizational confidence in AI before you ask people to trust it with anything important.

Second, the people freed from boring work become advocates for expansion. I have never seen someone complain that AI took over their most tedious responsibilities. Without exception, they want to know what else it can do.

Third, the failure modes are low-stakes. A mediocre AI-generated status report does far less damage than a mediocre AI-generated customer proposal. You build the organizational muscle for evaluating and improving AI outputs before the stakes are high.

Organizations that start with "exciting" AI applications (creative strategy, executive decision support, complex relationship management) consistently struggle. The work is too nuanced for early-stage AI deployment, the first failures erode trust, and the program loses momentum before it delivers real value.

Start boring. Build trust. Expand.

Replace Functions, Not People (The Distinction That Matters)

This is the most important conceptual distinction in this entire playbook.

Replacing a person: Sarah in marketing is gone. The AI does her job now. Result: fear, resentment, and organizational resistance. Often, silent sabotage of the AI tools.

Replacing a function: The content production function, which previously required three people, is now handled by one person directing AI agents. The three people who previously filled that function are now doing higher-leverage work elsewhere in the organization.

The function is automated. The people are elevated. The output is better. The cost is lower.

This works because "content writer" is actually a bundle of functions. Some of those functions require human judgment: understanding what content will resonate with specific audiences, recognizing when a draft has the wrong tone, knowing when to break the rules. Other functions do not require human judgment: generating first drafts, researching background information, formatting and editing for consistency.

AI handles the second category. The person focuses exclusively on the first. They are now doing substantially more interesting and higher-value work, and the organization gets dramatically more output.

Before:

- 3 content writers producing 15 articles/month
- Each writer: ~40% writing/editing, ~30% research, ~20% formatting/admin, ~10% strategy

After:

- 1 content strategist directing AI agents
- Content strategist: ~70% strategy/quality review, ~20% AI direction, ~10% admin
- Total output: 45+ articles/month at same or better quality

The person who stays becomes more valuable, not less. "Content strategist who directs AI production" is a more senior and better-compensated role than "content writer who also does formatting." Most people, when this is explained clearly, understand and accept it. Some even welcome it.

The ones who do not are often the ones whose value was concentrated in the execution layer rather than the judgment layer. This is a harder conversation. It is not a reason to avoid the transition.

The Four-Phase Deployment Sequence

After dozens of these transitions across different industries and team sizes, I have landed on a reliable sequence. Rushing any phase increases failure probability dramatically.

Phase 1: Shadow Mode (2-4 weeks)

Deploy AI agents alongside the existing team. The agents do the work, and every single output is reviewed by humans before it reaches any stakeholder. The human team keeps its full size and workload; the AI runs in parallel.

Purpose: validate quality, surface edge cases, and begin building institutional knowledge about where AI succeeds and where it needs help. Shadow mode also gives the existing team time to develop familiarity with AI outputs before they are responsible for directing them.

Success criteria: more than 85% of AI outputs pass quality review without major corrections.
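The Phase 1 exit criterion is simple enough to compute directly from review logs. Here is a minimal sketch, assuming every shadow-mode output receives exactly one reviewer verdict. The `ReviewVerdict` labels and the `shadow_mode_gate` function are hypothetical names, and counting "minor edits" as a pass is only one possible reading of "without major corrections".

```python
from collections import Counter
from enum import Enum


class ReviewVerdict(Enum):
    """Hypothetical reviewer labels for one AI output in shadow mode."""
    ACCEPTED = "accepted"            # shipped as-is
    MINOR_EDITS = "minor_edits"      # small fixes only; counted as a pass here
    MAJOR_CORRECTIONS = "major"      # substantial rework; counted as a fail


def shadow_mode_gate(verdicts: list[ReviewVerdict], threshold: float = 0.85) -> tuple[float, bool]:
    """Return (pass_rate, ready_for_phase_2).

    An output passes if it needed no major corrections. The gate opens only
    when the pass rate is strictly above the threshold.
    """
    if not verdicts:
        return 0.0, False  # no evidence yet, so never advance
    counts = Counter(verdicts)
    passed = counts[ReviewVerdict.ACCEPTED] + counts[ReviewVerdict.MINOR_EDITS]
    rate = passed / len(verdicts)
    return rate, rate > threshold


# Usage: 100 reviewed outputs, 12 of which needed major corrections.
verdicts = (
    [ReviewVerdict.ACCEPTED] * 70
    + [ReviewVerdict.MINOR_EDITS] * 18
    + [ReviewVerdict.MAJOR_CORRECTIONS] * 12
)
rate, ready = shadow_mode_gate(verdicts)
print(f"pass rate {rate:.0%}, advance to Phase 2: {ready}")
```

Using a strict comparison mirrors the "more than 85%" threshold: with 88 of 100 outputs passing, the gate opens, while exactly 85 would not.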

Phase 2: Supervised Autonomy (4-8 weeks)

AI handles routine cases independently. Humans review a random sample (10-20% of volume) and handle all edge cases and exceptions. The human role shifts from doing the work to auditing the work.

This phase is where you discover the edge cases your shadow mode did not surface. Build explicit exception handling for each one. "When the AI encounters X, it should do Y" is a real policy that needs to exist before Phase 3.

Success criteria: audited samples maintain quality standards, and the edge-case library is comprehensive enough that exceptions are handled gracefully.
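Phase 2 depends on two mechanisms: explicit exception rules and a random audit sample of routine work. Both fit in a few lines. This is a sketch under stated assumptions: `Case`, `ExceptionRule`, and `route` are hypothetical names, the keyword checks stand in for whatever classifier a real system would use, and the 15% audit rate is just one point in the 10-20% range described above.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    """One unit of work, such as a ticket or a draft request."""
    case_id: str
    text: str


@dataclass
class ExceptionRule:
    """A written "when the AI encounters X, it should do Y" policy."""
    name: str
    matches: Callable[[Case], bool]  # X: how to recognize the edge case
    action: str                      # Y: the human path it goes to instead


def route(case: Case, rules: list[ExceptionRule], audit_rate: float, rng: random.Random) -> str:
    """Route one case during supervised autonomy.

    Cases matching an exception rule always go to that rule's human path
    (first match wins). Everything else is handled by the AI, and a random
    `audit_rate` fraction of those routine cases is also queued for human audit.
    """
    for rule in rules:
        if rule.matches(case):
            return rule.action
    return "ai_then_audit" if rng.random() < audit_rate else "ai_only"


rules = [
    ExceptionRule("refund_dispute", lambda c: "refund" in c.text.lower(), "human_billing"),
    ExceptionRule("legal_threat", lambda c: "lawyer" in c.text.lower(), "human_escalation"),
]
rng = random.Random(42)  # seeded so the audit sample can be reproduced
cases = [Case(str(i), "How do I reset my password?") for i in range(1000)]
cases.append(Case("x", "I want a REFUND now"))
routes = [route(c, rules, audit_rate=0.15, rng=rng) for c in cases]
audited = routes.count("ai_then_audit")
print(f"{audited} of 1000 routine cases sampled for audit; refund case -> {routes[-1]}")
```

Because rules are checked in order, the rule list itself doubles as the written exception policy. Seeding the random generator makes a given audit sample reproducible, which helps when reviewers need to re-check a batch.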

Phase 3: Full Autonomy for Routine Work

AI handles 70-80% of volume without human review. Humans focus on complex cases (usually 10-20% of volume), quarterly quality audits, and strategic direction. The team shrinks.

This is where the economic gains materialize. It is also where the most mistakes happen if Phase 2 was rushed. The exception cases that were not handled in Phase 2 become visible failures in Phase 3. Run Phase 2 longer than you think necessary.

Phase 4: Systematic Expansion

Apply the same pattern to adjacent functions. The person who managed the Phase 1-3 transition for content production is now the expert who leads the transition for email marketing. And then for social media. And then for SEO.

Each subsequent transition is faster than the previous one because organizational muscle has developed: the team already knows how to evaluate AI quality, build exception handlers, and manage the transition. What took four months the first time takes six weeks the fifth time.

Specific Functions and What to Expect

The ROI varies significantly by function. Here is what I have measured across multiple engagements.

Content production. 4-6x output increase. 60-80% cost reduction. Quality maintained or improved on objective metrics (engagement, conversion). Timeline to Phase 3: 6-10 weeks. Risk: tone and brand voice consistency requires ongoing human attention.

Customer support (Tier 1). 50-70% of ticket volume handled autonomously within 90 days. Response time: hours to minutes. Customer satisfaction improves on simple issues (faster resolution), slightly declines on complex issues (agents route to humans but add a step). Timeline to Phase 3: 8-12 weeks. Risk: edge cases are often emotionally charged and require careful exception handling.

Code review (style and standards). Near-immediate automation of style, security anti-pattern, and test coverage checks. Developer time on these checks drops 80%. Timeline to Phase 3: 2-3 weeks. Risk: almost none for well-defined standards. Significant for judgment-heavy architectural review.

Financial reporting and analysis. Data compilation and standard report generation: 90% automated. Insight generation and commentary: 60-70% first-draft automated. Timeline to Phase 3: 4-6 weeks. Risk: numbers need human validation before distribution.

Sales development and outreach. Research and personalization: 80% automated. Volume can 3-5x. Response rates: mixed results. Quality of research improves, but over-reliance on AI outreach can feel impersonal if not carefully tuned. Timeline to Phase 3: 6-8 weeks.

The Change Management Problem

The hardest part of this transition is not technical. It is organizational.

People are afraid, and that fear is legitimate. AI automation does eliminate some jobs. Pretending otherwise is dishonest, and it erodes trust faster than any other mistake you can make.

The communication approach that works:

Be honest about what is changing. Which functions will be automated. What the team will look like afterward. Do not let people find out through rumors.

Be clear about who is affected and how. Who stays in an elevated role. Who is moving to a different team. Who is leaving and with what support.

Involve the team in building the AI systems. The people who currently do the work have the deepest knowledge of edge cases, quality standards, and what good looks like. Their knowledge is essential for building effective AI systems. Involving them in the build is both practically valuable and emotionally important.

Show the elevated work, not just the automated work. Most people would rather think strategically about content and direct AI production than spend 30% of their time on formatting and admin. Show them what the elevated role looks like before the transition happens.

Organizations that communicate poorly and make people feel like passive subjects of the transition get resistance that slows everything down. Organizations that communicate well and involve people actively get allies who want the AI to succeed.

The Governance Infrastructure You Will Need

AI-automated functions need governance infrastructure that manual functions do not require. Build this before you need it.

Quality monitoring. Ongoing sampling and scoring of AI outputs. Alerts when quality drops below threshold. Regular human audits.

Exception protocols. Clear routing rules for cases the AI cannot handle. Someone who is responsible for every exception category.

Incident response. What happens when an AI makes a visible mistake? Who is responsible? How is it corrected? How is the AI system updated to prevent recurrence?

Performance baselines. What did quality look like before AI automation? You need this baseline to measure whether quality has held steady, improved, or degraded over time.

Cost tracking. API costs, oversight time, and system maintenance costs. These are usually much lower than the labor they replace, but they need to be tracked explicitly.

The human-in-the-loop patterns required for this governance infrastructure are not optional extras. They are the difference between an AI-powered function that is trusted and one that quietly fails for months before anyone notices.
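Quality monitoring with threshold alerts is often the vaguest part of this infrastructure. One way to sketch it, assuming each sampled output receives a reviewer score between 0 and 1, is to keep a rolling window of recent scores and alert when the window's average drops below a threshold set from the pre-automation baseline. `QualityMonitor`, the 0.80 threshold, and the window sizes are hypothetical choices for illustration, not figures from this playbook.

```python
from collections import deque


class QualityMonitor:
    """Rolling-window monitor for reviewer scores on sampled AI outputs.

    Each sampled output gets a score in [0, 1]. Once at least `min_samples`
    scores exist, record() reports an alert whenever the mean of the most
    recent `window` scores is below `threshold`.
    """

    def __init__(self, threshold: float, window: int = 50, min_samples: int = 20):
        self.threshold = threshold
        self.min_samples = min_samples  # stay quiet until there is enough data
        self.scores: deque[float] = deque(maxlen=window)  # oldest scores fall off

    def record(self, score: float) -> bool:
        """Add one score and return True if the rolling mean breaches the threshold."""
        if not 0.0 <= score <= 1.0:
            raise ValueError("score must be between 0 and 1")
        self.scores.append(score)
        if len(self.scores) < self.min_samples:
            return False
        return self.rolling_mean() < self.threshold

    def rolling_mean(self) -> float:
        """Mean of the scores currently in the window (0.0 if empty)."""
        return sum(self.scores) / len(self.scores) if self.scores else 0.0


# Usage: 40 healthy outputs, then quality slips.
monitor = QualityMonitor(threshold=0.80)
alerts = [monitor.record(s) for s in [0.9] * 40 + [0.5] * 30]
first_alert = alerts.index(True)
print(f"first alert at output #{first_alert}, rolling mean now {monitor.rolling_mean():.2f}")
```

A rolling window reacts to sustained drift rather than to a single bad output, and the minimum-sample guard keeps the monitor quiet until there is enough data to mean something. In practice the alert would page whoever owns that function's exception category.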

What the Numbers Look Like

Real numbers from engagements I have been involved with, with identifying information removed.

A marketing team of three people costing $210K in salary (roughly $300K all-in with benefits and overhead) produced 15 articles, 30 social posts, and 8 email campaigns per month. After transition: one content strategist costing $95K directing AI agents. Total AI infrastructure cost: under $10K per year. Output: 50 articles, 90 social posts, and 24 email campaigns per month. All-in cost: $105K. Previous cost: $300K. Output: 3x higher. Quality maintained on all engagement metrics.
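The example does not say whether the strategist's $95K is salary or an all-in figure, and that matters because the $300K "before" number includes benefits and overhead. A small sketch makes both readings explicit. The overhead ratio is derived from the example's own numbers ($300K / $210K); applying that ratio to the new role is an assumption, and the AI figure is treated as its stated upper bound of $10K.

```python
# Figures from the marketing example above.
BEFORE_SALARY = 210_000
BEFORE_ALL_IN = 300_000
STRATEGIST_COST = 95_000  # basis (salary vs. all-in) not stated in the text
AI_INFRA = 10_000         # "under $10K per year", used as an upper bound

# Assumption: the new role carries the same overhead ratio as the old team.
overhead_ratio = BEFORE_ALL_IN / BEFORE_SALARY  # about 1.43

cost_if_95k_is_all_in = STRATEGIST_COST + AI_INFRA
cost_if_95k_is_salary = STRATEGIST_COST * overhead_ratio + AI_INFRA

before_output = {"articles": 15, "social posts": 30, "email campaigns": 8}
after_output = {"articles": 50, "social posts": 90, "email campaigns": 24}


def reduction(before: float, after: float) -> float:
    """Fractional cost reduction: 0.65 means the new cost is 65% lower."""
    return 1 - after / before


for label, cost in [("$95K as all-in", cost_if_95k_is_all_in), ("$95K as salary", cost_if_95k_is_salary)]:
    print(f"{label}: ${cost:,.0f}/yr, {reduction(BEFORE_ALL_IN, cost):.0%} below $300K")
for channel, before in before_output.items():
    print(f"{channel}: {after_output[channel] / before:.1f}x output")
```

Read as all-in, the new cost is $105K, a 65% reduction. Read as salary with the same overhead ratio, it is roughly $146K, about a 51% reduction. Output rises about 3.3x for articles and 3x for social posts and email campaigns under either reading.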

A customer support team of five handled 300 tickets per day with an average handle time of 12 minutes. After transition: two senior support specialists plus AI agents. AI handles 65% of tickets in under 2 minutes. Humans handle the remaining 35%, the complex cases, averaging 18 minutes each. Customer satisfaction on AI-handled tickets: slightly higher than before (faster resolution). Customer satisfaction on human-handled tickets: higher (specialists can spend more time per ticket). Total team cost: down 55%.
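The support figures imply a specific daily workload, and multiplying them out shows where the human hours go. This sketch uses only the stated numbers. It assumes the AI-resolved tickets consume no human time (a simplification; any spot audits would add some) and that every human-handled ticket takes the 18-minute average.

```python
# Figures from the support example above.
TICKETS_PER_DAY = 300
AI_SHARE_PCT = 65                    # share of tickets the AI resolves on its own
BEFORE_MINUTES_PER_TICKET = 12
AFTER_HUMAN_MINUTES_PER_TICKET = 18
BEFORE_HEADCOUNT = 5
AFTER_HEADCOUNT = 2

# Before: every ticket is handled by a person.
before_human_minutes = TICKETS_PER_DAY * BEFORE_MINUTES_PER_TICKET
before_per_agent_hours = before_human_minutes / 60 / BEFORE_HEADCOUNT

# After: AI tickets take no human time in this simplified model;
# humans take the remainder at the longer average handle time.
ai_tickets = TICKETS_PER_DAY * AI_SHARE_PCT // 100
human_tickets = TICKETS_PER_DAY - ai_tickets
after_human_minutes = human_tickets * AFTER_HUMAN_MINUTES_PER_TICKET
after_per_specialist_hours = after_human_minutes / 60 / AFTER_HEADCOUNT

print(f"human handling minutes/day: {before_human_minutes} -> {after_human_minutes}")
print(f"handling hours per person/day: {before_per_agent_hours:.2f} -> {after_per_specialist_hours:.2f}")
```

Total human handling time falls from 3,600 to 1,890 minutes per day, a reduction of just under half. Because headcount drops from five to two, the handling load per person rises from 12 to 15.75 hours per day. Both figures exceed a standard working day, so the example presumably reflects extended-hours or shift coverage; it is worth checking that assumption before using these numbers to size a team.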

These are not outlier results. The ROI is real and documented. The specific numbers by function and industry give you the data to build a business case for your specific situation.

FAQ

Q: How do you replace a development team with AI agents?

Follow a phased approach: AI-assisted code review and testing (weeks 1-4), AI feature development with human review (weeks 5-8), autonomous development with human architecture oversight (weeks 9+). Maintain quality gates (TypeScript strict mode, automated tests, build verification) while gradually increasing agent autonomy.
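The quality gates named in this answer can be expressed as an ordered checklist that every agent-written change must clear before it merges. Here is a minimal sketch, assuming a Node/TypeScript project. `Gate`, `run_gates`, and `gates_passed` are hypothetical names, and the exact commands depend on the repository: `tsc --noEmit` type-checks without emitting output, while the `test` and `build` entries assume matching scripts exist in `package.json`.

```python
import subprocess
from dataclasses import dataclass


@dataclass
class Gate:
    """One quality gate: a name and the command (argument list, no shell) that enforces it."""
    name: str
    command: list[str]


def run_gates(gates: list[Gate]) -> list[tuple[str, bool]]:
    """Run gates in order and stop at the first failure.

    Returns (name, passed) for each gate that ran. A missing tool counts as a
    failure rather than a crash, so a misconfigured runner cannot silently pass.
    """
    results: list[tuple[str, bool]] = []
    for gate in gates:
        try:
            completed = subprocess.run(gate.command, capture_output=True, text=True)
            passed = completed.returncode == 0
        except FileNotFoundError:
            passed = False
        results.append((gate.name, passed))
        if not passed:
            break  # no point running tests or the build if type-checking failed
    return results


def gates_passed(gates: list[Gate], results: list[tuple[str, bool]]) -> bool:
    """True only if every gate ran and every gate passed."""
    return len(results) == len(gates) and all(ok for _, ok in results)


# Hypothetical gates for a TypeScript repo. Assumes tsconfig.json sets
# "strict": true and package.json defines "test" and "build" scripts.
TS_GATES = [
    Gate("typecheck (strict)", ["npx", "tsc", "--noEmit"]),
    Gate("tests", ["npm", "test"]),
    Gate("build", ["npm", "run", "build"]),
]
```

An agent's pipeline would call `run_gates(TS_GATES)` and merge only when `gates_passed` returns True. Stopping at the first failure keeps feedback fast, and treating a missing tool as a failure means a broken pipeline blocks the merge instead of waving it through.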

Q: What roles can AI agents replace on a development team?

AI agents effectively replace junior-to-mid developers for feature implementation, QA engineers for testing, DevOps for deployment, technical writers for documentation, and code reviewers for routine reviews. Roles remaining human: senior architecture, product management, UX design, and team leadership.

Q: What is the realistic timeline for transitioning to AI agents?

A realistic transition takes 3-6 months: Month 1 for setup and pilots, Months 2-3 for expanding AI to routine features with human review, Months 4-6 for full autonomous development with human oversight on architecture. Rushing causes quality issues.


