Written by Gareth Simono, Founder and CEO of Agentik {OS}. Full-stack developer and AI architect with years of experience shipping production applications across SaaS, mobile, and enterprise platforms. Gareth orchestrates 267 specialized AI agents to deliver production software 10x faster than traditional development teams.

Business & Strategy · February 11, 2026 · 19 min read

Managing Humans and AI Agents Together: The New Playbook

Gareth Simono

Founder & CEO, Agentik{OS}

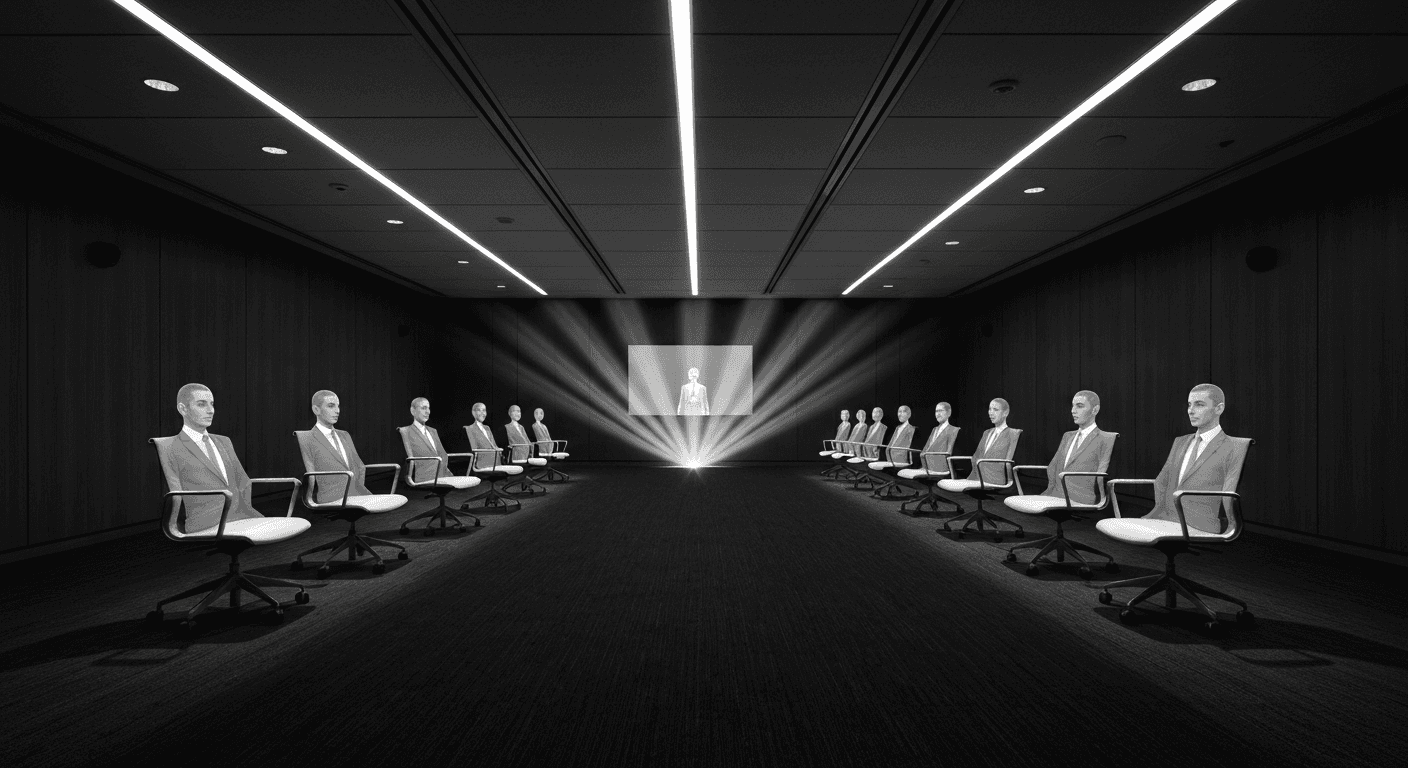

Your team now includes members who never sleep but never push back on bad decisions. Managing hybrid human-AI teams requires a completely new discipline.

Your team now includes members who never sleep, never take vacation, and never complain about workload. They also never question bad decisions, never push back on unrealistic timelines, and never tell you when your strategy is wrong.

Managing humans and AI agents together is not traditional management with a few bots added. It is a fundamentally different discipline that most managers are learning by making expensive mistakes.

I have spent the past year advising teams making this transition. The patterns are consistent. The mistakes are predictable. And the teams that figure it out first are building leads that will take competitors years to close.

The Hybrid Team Reality Most Leaders Miss

When I ask managers how many people are on their team, they typically give me the human headcount. They think of the AI agents as tools, not team members.

This framing is the first mistake.

An AI agent that handles customer support for 2,000 tickets per day is doing the work of 4-6 human agents. Treating it as a tool rather than a team member means you manage it like a software subscription rather than a high-output contributor. You do not review its work systematically. You do not give it feedback that improves its performance. You do not measure its output against the team's actual goals.

The teams winning with hybrid management are the ones who apply management discipline to their AI agents. They set performance expectations. They review output quality. They iterate on the systems that govern how agents operate. They track metrics.

The AI agents on your team will do exactly what you designed them to do, and exactly nothing more. The humans on your team will fill gaps, catch problems, and adapt to new situations. Design your hybrid team accordingly.

Redefining Team Structure

In a traditional team, the org chart reflects reporting relationships. In a hybrid team, the structure reflects capabilities.

Here is how I encourage leaders to map their hybrid teams:

| Work Type | Best Handler | Why |
|---|---|---|
| High-volume, structured tasks | AI agent | Consistency, speed, no fatigue |
| Novel problem-solving | Human | Judgment, creativity, adaptability |
| Relationship management | Human | Empathy, trust, context |
| Pattern-based analysis | AI agent | Data processing at scale |
| Strategic decisions | Human | Stakes, accountability, nuance |
| Quality control | Human + AI | AI catches mechanical errors, humans catch judgment errors |

Once you map your workflows against this matrix, the right structure becomes obvious. AI handles the structured, repeatable, high-volume work. Humans handle the judgment-intensive, relationship-driven, novel work.

Most teams that struggle with hybrid management have this backwards. They have humans doing the structured work and AI doing things that require judgment.
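As a rough illustration, the matrix can be written down as a lookup table and used to audit who currently handles each workflow. This is a minimal sketch, not a prescribed tool: the `Handler` enum, the `find_misassignments` function, and the example workflows are all hypothetical names invented here; only the work types and recommended handlers come from the table.

```python
from enum import Enum


class Handler(Enum):
    AI_AGENT = "AI agent"
    HUMAN = "Human"
    HUMAN_PLUS_AI = "Human + AI"


# Work types and recommended handlers, copied from the matrix.
WORK_TYPE_HANDLERS = {
    "high-volume structured tasks": Handler.AI_AGENT,
    "novel problem-solving": Handler.HUMAN,
    "relationship management": Handler.HUMAN,
    "pattern-based analysis": Handler.AI_AGENT,
    "strategic decisions": Handler.HUMAN,
    "quality control": Handler.HUMAN_PLUS_AI,
}


def find_misassignments(current_assignments):
    """Return workflows whose current handler differs from the matrix.

    current_assignments maps a workflow name to (work_type, current_handler).
    """
    mismatches = []
    for workflow, (work_type, current) in current_assignments.items():
        recommended = WORK_TYPE_HANDLERS[work_type]
        if current is not recommended:
            mismatches.append((workflow, current, recommended))
    return mismatches


# Hypothetical team; the workflow names are invented for illustration.
team = {
    "invoice data entry": ("high-volume structured tasks", Handler.HUMAN),
    "key account renewals": ("relationship management", Handler.HUMAN),
    "pricing strategy": ("strategic decisions", Handler.AI_AGENT),
}

for workflow, current, recommended in find_misassignments(team):
    print(f"{workflow}: handled by {current.value}, matrix suggests {recommended.value}")
```

In this made-up team, the audit flags exactly the backwards pattern described above: a human doing structured data entry and an AI agent making a strategic call.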

Managing AI Agents Like Senior Contributors

The most effective mindset shift I have seen: treat your AI agents like extremely capable senior contributors who have one critical weakness. They cannot adapt to implicit requirements. Everything must be explicit.

A human employee learns your company culture over time through observation and feedback. They absorb unwritten rules. They figure out which stakeholders are difficult and how to handle them. They develop a feel for when to push back and when to comply.

AI agents cannot do this through osmosis. Every preference, standard, and exception must be written down somewhere they can access it.

This is not a weakness in AI. It is a mirror held up to most organizations. If your standards and preferences are not written down, they exist only in the heads of specific individuals, and they walk out the door when those individuals leave.

Building the documentation that makes AI agents effective makes your organization more resilient overall.

The Briefing Document Practice

Every AI agent deployment I recommend starts with what I call a briefing document. Think of it as the onboarding manual for a highly capable employee who needs everything made explicit.

The briefing document covers:

Mission and scope. What is this agent responsible for? What is explicitly out of scope? Where does its authority end and human judgment begin?

Quality standards. What does good work look like? What are the examples of excellent output versus acceptable output versus unacceptable output?

Voice and tone. If the agent communicates externally, what personality should it project? What language should it avoid? What does your brand sound like?

Escalation protocols. What situations should the agent flag for human review rather than resolving autonomously? The more specific these protocols, the more confidently you can let the agent operate.

Performance metrics. How do you measure whether this agent is doing its job well? What numbers matter?

In my experience, an agent operating with a thorough briefing document outperforms one operating with vague instructions by a factor of 3-5. The output quality difference is dramatic.
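One way to keep a briefing document honest is to treat its five sections as a structured record and check for gaps before the agent goes live. The sketch below is only an assumption about how that might look: the `BriefingDocument` class, its field names, and the example content are hypothetical, and it treats every section as required even though voice and tone only matters for agents that communicate externally.

```python
from dataclasses import dataclass, field, fields


@dataclass
class BriefingDocument:
    """The five briefing sections; field names are illustrative."""
    mission_and_scope: str = ""
    quality_standards: str = ""
    voice_and_tone: str = ""
    escalation_protocols: list[str] = field(default_factory=list)
    performance_metrics: list[str] = field(default_factory=list)

    def missing_sections(self):
        """Return the names of sections that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical draft for a billing-support agent.
draft = BriefingDocument(
    mission_and_scope="Answer billing questions; refunds are out of scope.",
    escalation_protocols=["Customer mentions legal action", "Refund request"],
)

print(draft.missing_sections())
# ['quality_standards', 'voice_and_tone', 'performance_metrics']
```

A check like this does not judge whether a section is any good. It only makes the gaps visible, which is usually where vague instructions hide.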

Managing the Humans on Hybrid Teams

This is where most management literature has nothing to offer. The dynamics of human performance change significantly when AI agents are part of the team.

The Skill Evolution Problem

When AI handles the execution tasks, humans need to develop different skills. The junior employee who learned by doing routine work now finds that work automated. The senior employee who built their reputation on execution speed finds that advantage neutralized.

The skill evolution required:

Humans need to become better directors. Giving clear, specific instructions to AI agents is a skill. Some people are naturally good at this. Others require significant development. Invest in training that helps your team specify what they want with precision.

Humans need to become better evaluators. If AI is producing first drafts, humans need to evaluate them quickly and accurately. This requires sharp critical thinking and clear standards. People who could produce adequate work themselves often struggle to articulate what makes work adequate when reviewing AI output.

Humans need to move upmarket. The tasks that AI cannot do (relationship management, political navigation, genuine creative leadership, complex judgment calls) become more valuable. Help your team understand which of their skills sit in this category and invest in developing them.

When AI takes over what your team used to do, the question is not whether they need to change. It is whether you will help them change or leave them to figure it out alone.

Performance Management Gets Harder

Measuring human performance in hybrid teams requires new metrics.

Traditional metrics often measure activity: tasks completed, tickets closed, emails sent. In a hybrid team, AI handles most of those activities. Measuring humans by the same activity metrics as before becomes meaningless.

The metrics that matter for humans in hybrid teams:

Output quality, not volume. Did the work the human directed produce excellent results? The question is not how many things they oversaw, but how good the work was that came out the other side.

Agent performance. How well is the human managing their AI agents? Are the agents improving over time under their direction? Are they catching agent errors before they reach the customer?

Judgment accuracy. For decisions that required human escalation from AI, how good were the human calls? Did they escalate the right things? Did they make good decisions when asked?

Relationship outcomes. For the human-touch aspects of the role, what results are being produced? Customer retention, partner relationship quality, team morale metrics.

The Failure Modes Nobody Warns You About

I have seen the same failure patterns repeat across companies making the hybrid team transition. Each one is avoidable.

Over-Automation

The mistake: automate everything, keep humans only for the last mile.

The result: customers get an experience that feels robotically efficient but cold. Employees have no meaningful work and disengage. Problems that require judgment fall through the cracks because there is no human in a position to catch them.

I worked with a customer service operation that automated 90% of interactions. Support ticket volume dropped dramatically, but customer satisfaction dropped further. The 10% of cases that reached humans were the most complex, difficult cases, and the humans had no context because AI had handled everything before escalation.

The fix: design for human-AI collaboration, not human-AI handoff. The human should be present and involved throughout the customer journey for high-value interactions, even if AI is doing most of the work.

Under-Governance

The mistake: deploy AI agents without clear standards and review processes.

The result: the AI does things you would not have approved. It tells a customer something incorrect. It follows a rule that made sense for 95% of cases but was completely wrong for the customer in front of it. It creates work for humans to undo.

Every AI agent deployment needs a governance layer: regular output reviews, clear escalation protocols, feedback loops that improve the agent's performance, and a human who owns accountability for the agent's actions.

The Accountability Gap

When AI does something wrong, who is responsible?

In a hybrid team, this question matters more than most leaders think. If the AI agent misquotes a price to a customer, is it an IT problem? A management problem? A vendor problem?

The answer must be clear before something goes wrong. Designate explicit ownership for each AI agent. The owner is responsible for the agent's performance, its errors, and its improvement. When something goes wrong, the human owner is accountable, full stop.

This accountability structure creates incentives for good governance. When people own agent performance, they invest in making agents good.

Cross-Functional AI Integration

The most sophisticated hybrid teams I have seen do not think about AI on a function-by-function basis. They think about AI as connective tissue across the entire organization.

Marketing generates leads. An AI agent qualifies them instantly and routes them to the right salesperson with full context. The salesperson has a briefing before the first call. Another AI handles follow-up cadences. Customer success has an AI that monitors account health and surfaces intervention signals.

The humans are making the high-value judgment calls. The AI is handling the connective work between those calls and ensuring nothing falls through the cracks.

The Coordination Layer

One pattern I keep seeing in well-run hybrid organizations: a designated role responsible for AI system design and coordination. Not an IT role. A business role.

This person understands the workflows deeply enough to design AI integrations that actually serve the business. They maintain the briefing documents. They review agent performance. They identify new automation opportunities. They manage the human-AI interface.

In smaller companies, this is a part-time responsibility for an existing team member. In companies past 20-30 people, it becomes a full-time role that pays for itself many times over.

Measuring Hybrid Team Performance

The metrics framework I recommend for hybrid teams:

Efficiency metrics. Time-to-completion for standard workflows. Cost per unit of output. Error rates. These tell you how well the AI components are performing.

Quality metrics. Customer satisfaction scores. Defect rates. Rework frequency. These tell you whether speed and cost optimization are coming at the expense of quality.

Human performance metrics. Output quality per human, judgment accuracy, agent management effectiveness. These tell you how well your humans are operating in the hybrid environment.

System health metrics. Agent escalation rates, out-of-scope request rates, override frequency. These tell you whether your AI systems are calibrated correctly for your actual workflows.

Review all four categories together. An improvement in efficiency metrics accompanied by a decline in quality metrics is not success. It is a failure mode that looks like success until it is not.
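The "review together" rule can be made mechanical. The following is a minimal sketch under stated assumptions: the `MetricChange` record, the `false_success` check, and the sample numbers are all hypothetical, and "improved" simply means the metric moved in its preferred direction over the review period.

```python
from dataclasses import dataclass


@dataclass
class MetricChange:
    category: str           # "efficiency", "quality", "human", or "system_health"
    name: str
    change_pct: float       # period-over-period change, in percent
    higher_is_better: bool  # False for costs, error rates, rework, and similar

    def direction(self):
        """Return +1 if the metric improved, -1 if it worsened, 0 if unchanged."""
        if self.change_pct == 0:
            return 0
        went_up = self.change_pct > 0
        return 1 if went_up == self.higher_is_better else -1


def false_success(changes):
    """True when an efficiency metric improved while a quality metric declined."""
    efficiency_up = any(
        c.category == "efficiency" and c.direction() > 0 for c in changes
    )
    quality_down = any(
        c.category == "quality" and c.direction() < 0 for c in changes
    )
    return efficiency_up and quality_down


# Invented figures for illustration only.
changes = [
    MetricChange("efficiency", "cost per unit of output", -18.0, higher_is_better=False),
    MetricChange("quality", "customer satisfaction score", -6.0, higher_is_better=True),
    MetricChange("quality", "rework frequency", 9.0, higher_is_better=False),
]

if false_success(changes):
    print("Efficiency improved while quality declined: investigate before celebrating.")
```

The same pattern extends to the other two categories, for example flagging a falling escalation rate alongside a rising override frequency.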

The Culture Question

Here is the part most management playbooks skip: hybrid teams require a culture shift, not just a process shift.

In a culture that values looking busy and logging hours, AI integration creates anxiety. If AI does the work faster, does the human still have value? Am I going to get replaced?

In a culture that values outcomes and judgment, AI integration creates opportunity. I can take on more complex work. I can move faster. I can deliver more value.

The difference is not which tools you deploy. It is how you lead the conversation about them.

I have seen the same AI integration succeed brilliantly in one team and fail miserably in another at the same company. The technology was identical. The leadership was different. One leader framed it as capability expansion. The other let it feel like workforce reduction.

The hybrid team future is not just about systems. It is about leadership.

FAQ

Q: How do you manage a team of humans and AI agents?

Managing hybrid teams requires clear role definitions (what humans decide vs what agents execute), standardized communication protocols (how humans review agent work), quality gates (automated checks plus human review at key points), and transparent workflows (everyone can see what agents are doing and why).

Q: What management practices work for human-AI teams?

Effective practices include daily stand-ups that review agent output quality, clear escalation paths from agents to humans, documentation-driven workflows (agents follow written specs), regular calibration of agent autonomy levels, and metrics that track both human and agent performance.

Q: How do you prevent AI agents from reducing team morale?

Prevent morale issues by positioning AI as a tool that eliminates tedious work rather than one that replaces people, celebrating how AI frees humans for more interesting tasks, involving the team in AI workflow design, and ensuring humans retain meaningful decision-making authority.
