
Written by Gareth Simono, Founder and CEO of Agentik {OS}. Full-stack developer and AI architect with years of experience shipping production applications across SaaS, mobile, and enterprise platforms. Gareth orchestrates 267 specialized AI agents to deliver production software 10x faster than traditional development teams.

AI Development | January 12, 2026 | 16 min read

AI Pair Programming: Beyond Copilot to Full Autonomy

Gareth Simono

Founder & CEO, Agentik{OS}

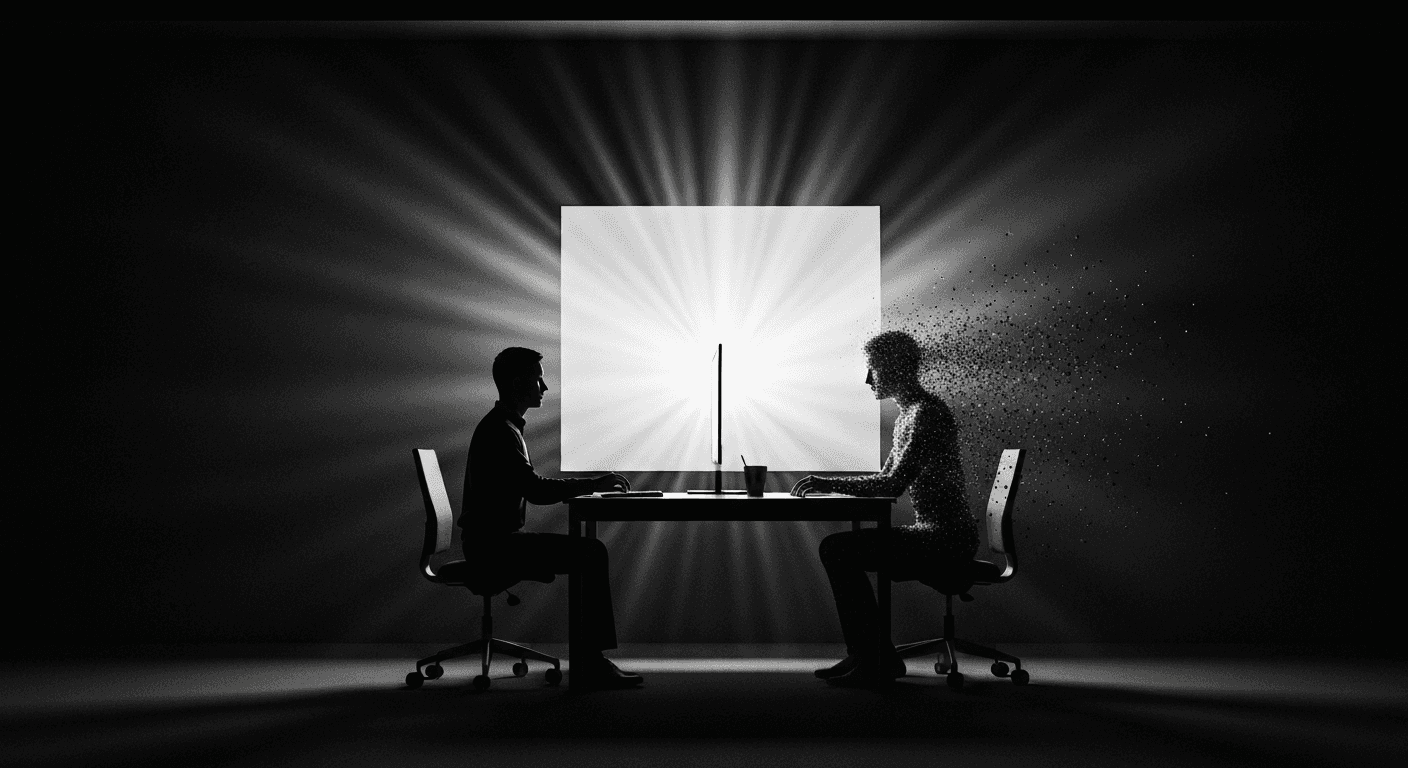

The jump from code completion to real AI pair programming. How modern agents collaborate on complex tasks, not just finish your sentences.

Copilot was training wheels. Useful training wheels. The kind that helped you go faster without falling over. But training wheels nonetheless.

I remember the first time GitHub Copilot suggested a complete function body and I thought "okay, this changes things." That instinct was correct. It just wasn't correct in the way I imagined. Copilot didn't change things by completing my code. It changed things by proving that AI could understand programming context well enough to be genuinely useful. It proved the concept.

What came next proved it could do so much more.

The Context Gap Nobody Talks About

Code completion and AI pair programming differ along one dimension that sounds technical but has enormous practical consequences: scope.

Code completion operates at the line or function level. The model sees the current file, maybe a few imports, and predicts what you probably want to type next. Fast, convenient, shallow. Good at finishing thoughts.

AI pair programming operates at the project level. It understands how components interact. It knows where technical debt is accumulating. It can tell you that the feature you're about to implement will conflict with something in a module you haven't opened in three weeks. It can notice that you're solving this problem the same way it was solved incorrectly in two other places.

This is the difference between a tool that finishes your sentences and a collaborator who understands your codebase.

The implications are significant. Code completion makes individual developers faster at execution. AI pair programming changes what problems a developer can successfully tackle, at what level of complexity, with what confidence.

An experienced developer with AI pair programming can maintain architectural coherence across a codebase that would previously have required a team. The AI holds the full picture while the human makes decisions.

What a Real Session Looks Like

Forget the autocomplete mental model. AI pair programming is a conversation that spans an entire feature.

I describe what I want to build at an appropriate level of abstraction. "We need a notification system. Users should be able to receive notifications through email, in-app messages, and push notifications. They should be able to configure preferences per-channel, and per notification type within each channel."

The AI proposes an implementation plan. Database schema for notification preferences with appropriate normalization. Service layer for dispatching to each channel. API endpoints for reading and updating preferences. UI components for the settings page. Webhook handler for receiving events. The plan is complete and coherent before any code is written.

We refine the plan together. "The email integration should use Resend, not SendGrid. Preferences should default to all-on, not all-off. The in-app notifications should use Convex for real-time updates rather than polling." The AI adjusts the plan and explains the implications of each change.
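To make the refined plan concrete, here is a minimal sketch of how per-channel, per-type preferences with all-on defaults might be modeled. Everything in it is an assumption for illustration: the `Channel` and `NotificationType` values, the `PreferenceOverrides` shape, and the `isEnabled` and `channelsFor` helpers are hypothetical names, not code from a real session.

```typescript
// Hypothetical channel and notification-type names, chosen for illustration.
type Channel = "email" | "inApp" | "push";
type NotificationType = "comment" | "mention" | "billing";

const CHANNELS: readonly Channel[] = ["email", "inApp", "push"];

// Only explicit user choices are stored; a missing entry means "use the default".
type PreferenceOverrides = Partial<
  Record<Channel, Partial<Record<NotificationType, boolean>>>
>;

// The plan above settled on all-on defaults.
const DEFAULT_ENABLED = true;

// Resolve one (channel, type) pair, falling back to the default when unset.
function isEnabled(
  overrides: PreferenceOverrides,
  channel: Channel,
  type: NotificationType,
): boolean {
  return overrides[channel]?.[type] ?? DEFAULT_ENABLED;
}

// Channels a dispatch layer would send to for a given notification type.
function channelsFor(
  overrides: PreferenceOverrides,
  type: NotificationType,
): Channel[] {
  return CHANNELS.filter((channel) => isEnabled(overrides, channel, type));
}

// A user who turned off comment emails still gets comments in-app and by push.
const overrides: PreferenceOverrides = { email: { comment: false } };
console.log(channelsFor(overrides, "comment")); // inApp and push only
console.log(channelsFor(overrides, "billing")); // all three channels
```

Storing only overrides is one possible design choice: switching the default later would not require rewriting every user's row. A real schema may well prefer explicit rows per preference.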

Then the AI builds. Schema first, then service layer, then API routes, then UI components, then tests. I review as it goes. I catch architectural decisions that need adjustment before they've propagated through the codebase. I spot places where the implementation diverges from the product vision. I make judgment calls about trade-offs.

This collaborative loop is faster than either pure manual development or fully autonomous generation. The human provides judgment and product vision. The AI provides execution speed and systematic thoroughness.

The Conversation Patterns That Work

Good AI pair programming sessions have recognizable patterns. Running these sessions well is a learnable skill.

Start with intent, not implementation. "I want users to be able to search their notification history" is better than "write a search function for the notifications table." Give the AI latitude to propose an approach. Then evaluate it.

Ask for options, not answers. "What are two different ways we could implement real-time updates here, and what are the trade-offs?" This surfaces information you might not have. The AI often knows patterns you don't.

Challenge decisions you don't understand. "Why did you use a compound index here rather than two separate indexes?" The AI explains its reasoning. Sometimes the reasoning is good and you learn something. Sometimes the reasoning is wrong and you catch a mistake early.

Iterate visibly. When you want something changed, say why. "This error message won't make sense to users who don't know what a webhook is. Can you make it more human?" The AI applies the feedback and, because the reason stays in the conversation, carries that preference forward through the rest of the session.

Use context generously. Share relevant background the AI might not have. "We're targeting non-technical users for this feature, so the terminology should be plain English throughout." Context shapes everything.

```typescript
// Example of the collaborative specification pattern
// Developer describes intent:
// "Users should be able to filter their notification history
// by date range and notification type. We want URL-synced filters
// for shareability. Performance matters - max 1000 notifications shown."

// Agent proposes and implements:
// (Imports assume React with React Router, whose useSearchParams returns
// the [params, setter] pair used below.)
import { useCallback, useMemo } from 'react';
import { useSearchParams } from 'react-router-dom';

// Assumed placeholder: the original example does not define NotificationType.
type NotificationType = string;

type NotificationFilters = {
  startDate?: Date;
  endDate?: Date;
  types?: NotificationType[];
  page: number;
};

function useNotificationFilters() {
  const [searchParams, setSearchParams] = useSearchParams();

  // Derive typed filters from the URL; recomputed only when the params change.
  const filters: NotificationFilters = useMemo(() => ({
    startDate: searchParams.get('from')
      ? new Date(searchParams.get('from')!)
      : undefined,
    endDate: searchParams.get('to')
      ? new Date(searchParams.get('to')!)
      : undefined,
    types: searchParams.getAll('type') as NotificationType[],
    page: parseInt(searchParams.get('page') ?? '1', 10),
  }), [searchParams]);

  // Write filter changes back to the URL without adding a history entry.
  const setFilters = useCallback((updates: Partial<NotificationFilters>) => {
    const next = new URLSearchParams(searchParams);
    // ... update logic
    setSearchParams(next, { replace: true });
  }, [searchParams, setSearchParams]);

  return { filters, setFilters };
}
```

The developer described intent. The agent produced a URL-synced filter implementation with proper types, memoization, and clean state management. The developer reviews it, understands it, and either approves or refines. That is the loop.
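The `// ... update logic` step in the example is left open, and it is exactly the kind of detail worth reviewing. One way it could look, as a sketch only: a pure helper that copies the current params and applies the changes. The name `applyFilterUpdates`, the `NotificationType` values, the date format, and the reset-to-page-1 rule are all assumptions for illustration, not part of the original example.

```typescript
// Hypothetical type values; the filter shape mirrors the example above.
type NotificationType = "comment" | "mention" | "billing";

type NotificationFilters = {
  startDate?: Date;
  endDate?: Date;
  types?: NotificationType[];
  page: number;
};

// Returns a new URLSearchParams with the updates applied; the input is not mutated.
function applyFilterUpdates(
  current: URLSearchParams,
  updates: Partial<NotificationFilters>,
): URLSearchParams {
  const next = new URLSearchParams(current);

  // Dates are written as YYYY-MM-DD (UTC); passing undefined clears the param.
  const setDate = (key: string, value: Date | undefined) => {
    if (value) next.set(key, value.toISOString().slice(0, 10));
    else next.delete(key);
  };

  if ("startDate" in updates) setDate("from", updates.startDate);
  if ("endDate" in updates) setDate("to", updates.endDate);

  // Repeated "type" params carry the list; replace them wholesale.
  if ("types" in updates) {
    next.delete("type");
    for (const t of updates.types ?? []) next.append("type", t);
  }

  // Assumed rule: any update that does not name a page returns to page 1.
  next.set("page", String(updates.page ?? 1));

  return next;
}

const next = applyFilterUpdates(
  new URLSearchParams("type=comment&page=3"),
  { types: ["mention"] },
);
console.log(next.toString()); // page=1&type=mention
```

Keeping this logic outside the hook means it can be unit tested without rendering anything, which fits the fast-feedback loop the rest of this piece argues for.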

The Senior Developer Multiplier

Productivity gains from AI pair programming scale with developer experience. This surprised me initially. I expected the benefit to be roughly equal across skill levels.

A junior developer benefits significantly. They get better code suggestions, catch more errors, and move faster than they would alone. But they spend a lot of time evaluating whether the AI's suggestions are correct, and sometimes they can't tell.

A senior developer with a clear mental model of what they want can direct the AI like a conductor directing an orchestra. They evaluate output at a glance. They spot architectural problems immediately. They make instantaneous judgments about whether a proposed approach fits the broader system.

The result, in my experience: senior developers see roughly 5-8x productivity gains where juniors see 2-3x. This is not because the AI is smarter for senior developers. It is because senior developers can use the AI's speed without sacrificing quality control.

The implication for team structure is significant. A team of three senior developers with AI pair programming can often produce what a team of fifteen produced before. The bottleneck shifts from execution capacity to judgment and decision-making capacity.

The Skill Shift Is Real and Happening Fast

The skills that differentiate developers are shifting. Not eliminating. Shifting.

Three years ago, the most valuable developer was the one who could implement complex features quickly and accurately in frameworks that had steep learning curves. Framework expertise was a genuine competitive advantage.

Today, framework expertise alone is not a differentiator. Given a good specification, an AI agent can implement a feature correctly in Next.js, Django, Rails, or Spring Boot. What matters now is a different set of skills.

The ability to think at the right level of abstraction. Too specific, and you micromanage the AI toward worse solutions. Too vague, and the AI makes assumptions that don't fit your context. Finding the right level for each collaboration is a skill.

The ability to evaluate output quickly and accurately. A developer who can review agent-generated code at 300 lines per minute with a high catch rate for real problems is far more productive than one who reads slowly and flags every stylistic preference.

Systems thinking. How does this feature interact with everything else? What failure modes exist? What does this look like at 100x current scale? The AI handles implementation details. The human needs to see the full system.

User empathy. Technical correctness is table stakes. Does this feature solve the user's actual problem? Is the interaction clear? Does the error message help? These questions require human judgment.

Investing in these skills now compounds over time in a way that pure execution speed never could. The developers who will be most valuable in five years are already investing in them.

The Setup That Produces Great Sessions

AI pair programming quality depends heavily on the working environment.

A thorough CLAUDE.md is the foundation. The AI needs to know your project's patterns, conventions, and decisions to pair effectively. Without this context, every session starts from scratch.
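As an illustration, a CLAUDE.md is a plain markdown file of project instructions that Claude Code reads as session context. The fragment below is a hypothetical sketch, not a real project's file; its entries simply restate decisions mentioned earlier in this article, and the headings are an arbitrary choice.

```markdown
# Project conventions (hypothetical example)

## Stack
- Email is sent through Resend; do not add SendGrid.
- In-app notifications use Convex for real-time updates, not polling.

## Patterns
- Notification preferences default to all-on.
- List filters are synced to URL search params so views are shareable.

## Writing for users
- The audience is non-technical: plain English in all UI text and error messages.

## Workflow
- Build in this order: schema, service layer, API routes, UI components, tests.
- Run the test suite after every change.
```

The value is less in any single line than in not having to repeat these decisions at the start of every session.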

Clean, well-organized code gives the AI accurate context. It reads your existing code to understand patterns. If your existing code is inconsistent, it learns inconsistent patterns.

Fast tests enable fast feedback loops. The AI's ability to iterate depends on how quickly it can verify that changes are correct. Test suites that run in thirty seconds enable much faster iteration than ones that take fifteen minutes.

A specification habit changes everything. Take five minutes before each session to write a precise description of what you want to build. The return on that five-minute investment is enormous.

Start with medium-complexity features. Describe them precisely. Review everything the AI produces. Correct patterns early. Within three sessions, you will feel the shift in how you work.

For the deeper collaboration patterns at the team level, see multi-agent orchestration. For the fully autonomous variant, see autonomous coding agents.

FAQ

Q: What is AI pair programming?

AI pair programming is a collaborative development approach where an AI agent works alongside a human developer in real time, going far beyond simple code completion. Unlike autocomplete tools like GitHub Copilot that predict the next token, AI pair programming involves the agent understanding project context, suggesting architectural approaches, implementing features, and engaging in back-and-forth dialogue about design decisions.

Q: How is AI pair programming different from GitHub Copilot?

GitHub Copilot predicts your next line of code based on context. AI pair programming with tools like Claude Code operates at a fundamentally different level — it understands your entire codebase, makes architectural suggestions, implements complete features, writes tests, and engages in design discussions. The gap is like the difference between a spell checker and a writing partner.

Q: When should you use AI pair programming vs autonomous coding agents?

Use pair programming mode when the task requires creative judgment, ambiguous requirements, or institutional knowledge — situations where real-time human-AI dialogue produces better results. Use autonomous mode for well-specified tasks with clear requirements like boilerplate, testing, and standard feature implementation. Most productive workflows combine both modes.

Q: What skills do developers need for effective AI pair programming?

The key skills are specification writing (precise, unambiguous feature descriptions), architectural thinking (knowing what to build and why), quality judgment (recognizing when AI output feels wrong despite passing tests), and feedback calibration (correcting AI patterns early so they replicate correctly).
