
Written by Gareth Simono, Founder and CEO of Agentik {OS}. Full-stack developer and AI architect with years of experience shipping production applications across SaaS, mobile, and enterprise platforms. Gareth orchestrates 267 specialized AI agents to deliver production software 10x faster than traditional development teams.

Future of AI · January 9, 2026 · 17 min read

AI Predictions 2026: What Happened vs Expected

Gareth Simono

Founder & CEO, Agentik{OS}

Everyone predicted AGI. Nobody predicted the economics. Here's what the prediction crowd got right, wrong, and completely backwards about 2026.

Remember when people said 2026 would be the year of AGI?

It was not. Obviously.

But something arguably more interesting happened. AI stopped being a technology story and became an economics story. The shift was subtle at first, then sudden. And the people who had been confidently predicting the wrong things were also the last ones to notice.

I have been tracking AI predictions since 2023. Recording what experts said, then checking back when the deadline arrived. The hit rate is surprisingly consistent. Not in a good way. Pundits who were confidently wrong about 2024 were confidently wrong about 2025 and are confidently wrong about 2026 in predictable ways.

The people making accurate predictions share a characteristic: they think about economics first, capabilities second. The people making bad predictions do the opposite.

This is what actually happened in 2026, and why the gap between prediction and reality matters for how you build things now.

The Big Miss: AGI Remained a Philosophy Debate

The AGI prediction machine never sleeps. Every year, a new cluster of experts pins a number to the AGI arrival date. Every year, the date gets revised as the previous one passes without incident.

2026 was supposed to be different. GPT-5 rumors. New reasoning architectures. Scaling law extrapolations pointing at inflection points. The community worked itself into a genuine frenzy.

What actually happened: models got significantly better at specific tasks. Coding, reasoning, multi-step problem solving all improved substantially. But the defining characteristic of AGI, generalized intelligence that transfers seamlessly across arbitrary novel domains without training, remained elusive.

What improved was breadth and reliability, not novel reasoning. Models became more consistent. Better at staying on task. Less prone to the spectacular failures that used to make AI skeptics feel vindicated. That is meaningful progress. It is not AGI.

The AGI debate is philosophically interesting and practically irrelevant for anyone building products right now. Whether we hit some threshold labeled "AGI" this year or in fifteen years does not change what you should be building today.

The more interesting story is what happened in the space between "narrow AI" and "AGI" that everyone was too busy arguing about timelines to notice.

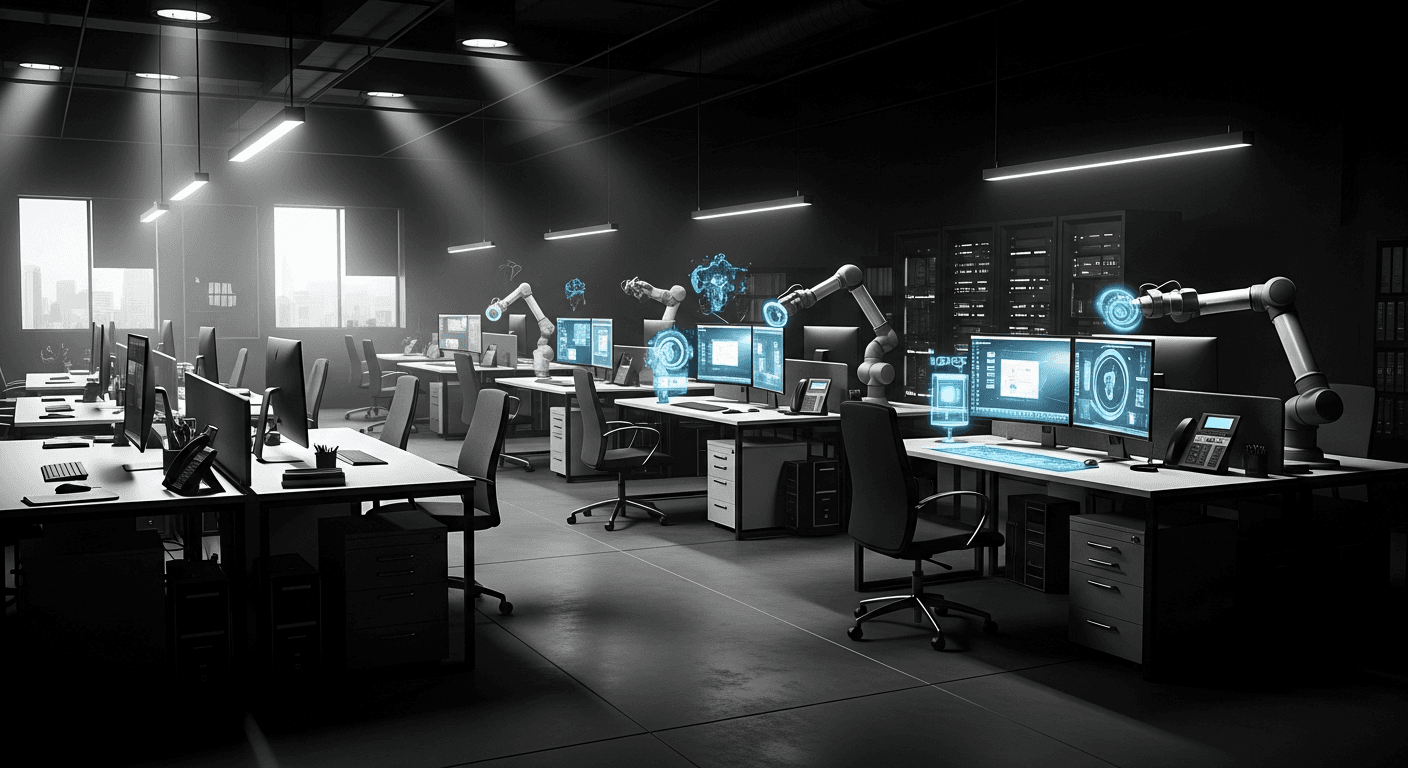

The Big Hit: Agents Became Standard Infrastructure

This one was almost too easy to call in hindsight. But the scale surprised even the optimists.

The majority of professional software developers now use AI coding assistants daily. Not occasionally. Not experimentally. Daily, as a core part of their workflow. Autonomous coding agents handle significant portions of routine development work. Write a database migration. Generate comprehensive test coverage. Scaffold a new feature from a description. Create API documentation.

These tasks increasingly go to agents rather than junior developers.

What predictions missed was the second-order effect. AI did not just speed up individual developers. It changed team structures entirely. Companies run leaner engineering teams producing more output than before. The ratio of shipping to headcount shifted dramatically.

A solo founder with a well-configured set of AI agents now produces output that previously required a team of five. Not theoretical output. Shipped, production-quality software with real users paying real money. I see this every week. Teams of one building products that look like twenty engineers built them.

The adjacent prediction that held up: enterprise adoption accelerated faster than consumer adoption. Large companies with dedicated budgets and clear ROI metrics moved faster than individuals experimenting on personal projects. The earliest forecasts, which expected individual enthusiasts to lead, got the adopter demographics backwards.

The Real Driver Nobody Called: Economics, Not Breakthroughs

This was the pivotal miss. The prediction everyone should have made but almost nobody did.

The prevailing narrative said adoption would accelerate when models got smarter. Better reasoning. Larger context windows. More reliable outputs. And yes, models did get better. That is not what triggered the adoption wave.

Costs collapsed. Inference prices dropped 70-80% year over year through 2025 and into 2026. A query that cost $0.10 in early 2025 costs $0.02 now. A query that cost $1.00 in 2023 costs under a penny today.
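The arithmetic behind these figures compounds. As a simplification that assumes a constant annual decline rate $r$ (real price cuts arrive in uneven steps), the cost of a fixed query after $t$ years is:

$$
c_t = c_0 \, (1 - r)^t
$$

$$
\begin{aligned}
\$0.10 \times (1 - 0.80)^1 &= \$0.02 \\
\$1.00 \times (1 - 0.80)^3 &= \$0.008 \\
\$1.00 \times (1 - 0.70)^3 &= \$0.027
\end{aligned}
$$

So the sub-penny figure for a 2023 query holds at the 80% end of the range; at a steady 70% decline, the same $1.00 query would still cost about 2.7 cents.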

This cost decline made business cases that were marginally viable suddenly obvious. AI-powered customer support became dramatically cheaper than human agents even accounting for quality gaps. AI-generated marketing content became 10x cheaper than freelancers even at reduced quality. The math stopped being debatable.

Reliability improved alongside cost reduction. Not a coincidence. Smaller, faster, cheaper models that run inference at 10x lower cost also tend to be more consistent and predictable. The wild hallucinations and spectacular failures that dominated AI discourse in 2023 became rarer.

Businesses that were waiting for certainty got it. Not because AI became perfect. Because it became reliably good enough at specific tasks, at prices that made the economics work.

| Year | Approx. cost per GPT-4-class query (USD) | Adoption phase |
|---|---|---|
| 2023 | ~$0.10-0.30 | Early enthusiasts |
| 2024 | ~$0.05-0.10 | Early majority |
| 2025 | ~$0.01-0.05 | Late majority |
| 2026 | ~$0.002-0.01 | Ubiquitous infrastructure |

The lesson that should inform every future prediction: technology adoption is almost always an economics story disguised as a technology story. The capability was there in 2024. The economics arrived in 2025-2026. Adoption followed economics, not capability milestones.

The Prediction That Kept Failing: Mass Unemployment

Every year since 2022, a significant cluster of predictions has forecast AI-driven mass unemployment within 12-24 months. Every year, the labor market does not collapse.

2026 continued this pattern. And I think it will continue for longer than most people expect, not because AI is not transformative, but because economies absorb technology shocks more gradually than technology timelines suggest.

What actually happened is more nuanced than either the doom camp or the "nothing changes" camp predicted.

Jobs shifted. Roles involving routine information processing contracted. Roles requiring judgment, relationships, and novel problem-solving expanded or remained stable. The labor market adapted, messily and slowly. New roles emerged faster than old ones disappeared, but they were not the roles that were eliminated, and the people who lost the old roles could not simply step into the new ones.

A data entry clerk cannot easily become a prompt engineer. The skills do not transfer. The adaptation requires time, retraining, and geographic mobility that many affected workers lack. This creates genuine hardship for real people even while aggregate employment statistics look healthy.

The more accurate framing: not mass unemployment but significant wage compression in knowledge work. The spread between mediocre and excellent narrowed because AI raised the floor of competence. A mediocre analyst with good AI tools produces output that five years ago required a genuinely skilled analyst. That changes compensation dynamics fundamentally.

The labor market impact of AI is real, significant, and concentrated in specific demographics and geographies. The aggregate employment statistics that dominate public discourse miss this entirely.

What the Optimists Got Right

Creative augmentation played out roughly as the optimists predicted, though faster.

Designers generate more concepts in a week than they used to in a month. Writers produce more drafts, explore more angles, experiment more. Musicians iterate through arrangements that would have taken weeks manually. The amplification effect on creative output is real and substantial.

Medical AI delivered on narrower promises than the big predictions suggested, but delivered reliably. Radiology assistance, drug discovery acceleration, clinical note summarization. Not general medical AI that replaces doctors. Specific tools that make specific parts of healthcare more efficient. Unglamorous. Valuable.

Code quality improved measurably. Not just developer speed. The bar for what constitutes "acceptable" code rose as AI-generated code improved. Junior developers now write code that looks like mid-level developers wrote it three years ago. This is good for software quality and complicated for junior developer hiring.

What the Pessimists Got Wrong

AI safety concerns were taken seriously and addressed more effectively than pessimists predicted.

The doom scenarios (AI systems pursuing misaligned goals, rapidly self-improving beyond human control, or being weaponized at civilization-threatening scale) did not materialize in 2026. This is not evidence they cannot happen. It is evidence that the combination of researcher attention, corporate caution, and regulatory pressure created more friction than pessimists accounted for.

The open-source AI community proved more responsible than predicted. When Llama 3 and its successors became widely available, the predicted wave of weaponized AI did not arrive. Bad actors found AI marginally useful, much as they find any other general-purpose tool marginally useful. The uplift to serious threats was smaller than predicted.

AI-generated content did not destroy epistemics at the scale feared. Misinformation spread more easily. Distinguishing AI-generated from human content became harder. But catastrophic epistemic collapse did not arrive. Humans proved more resistant to manipulation than the pessimists assumed.

The Trajectory: Integration, Not Revolution

The next five years will not look like science fiction. They will look like spreadsheets.

AI is becoming invisible infrastructure. Embedded in every SaaS tool, every business process, every consumer application. Not as a separate "AI feature" you opt into, but as an underlying capability making everything slightly smarter, slightly faster, slightly cheaper.

The companies benefiting most are not AI companies. They are traditional businesses that redesigned operations around AI capabilities. A logistics company using AI for route optimization. An insurance company using AI for claims processing. A school district using AI for personalized tutoring. Unglamorous. Transformative.

The implication for builders: stop building AI products. Start building products that happen to use AI. The distinction matters.

AI products compete on capability, which commoditizes rapidly. When you are selling "AI writing," you are competing with every other AI writing tool, and the differentiator is which underlying model you are using, which you do not control. Products that use AI compete on domain expertise, user experience, and distribution. Those advantages compound.

Build for the spreadsheet version of the future. It is more profitable than the science fiction version.

Making Better Predictions: A Framework

If you want to predict AI's impact on your industry or business, stop asking "how capable will AI be?" and start asking "what happens to unit economics when AI handles X?"

Take customer support. The question is not "will AI be good enough to handle support tickets?" AI has been good enough for many support tickets since 2023. The question is "at what cost per ticket does it become economically obvious to replace human support with AI?" When you frame it this way, you can actually calculate a threshold and watch for it.
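A minimal sketch of that threshold calculation, under a simple cost model that is an assumption here rather than something the framework above specifies: the AI attempts every ticket at cost `ai_cost`, resolves a fraction `resolution_rate` on its own, and escalates the rest to a human at the full `human_cost`. The function names and the dollar figures in the usage lines are hypothetical.

```python
def blended_cost_per_ticket(ai_cost: float, human_cost: float, resolution_rate: float) -> float:
    """Expected cost per ticket when AI attempts every ticket and escalates failures."""
    if not 0.0 <= resolution_rate <= 1.0:
        raise ValueError("resolution_rate must be between 0 and 1")
    # Every ticket pays for the AI attempt; unresolved tickets also pay for a human.
    return ai_cost + (1.0 - resolution_rate) * human_cost


def break_even_ai_cost(human_cost: float, resolution_rate: float) -> float:
    """Highest AI cost per attempt at which the blended setup still matches all-human support.

    Solving ai_cost + (1 - p) * human_cost <= human_cost for ai_cost gives ai_cost <= p * human_cost.
    """
    if not 0.0 <= resolution_rate <= 1.0:
        raise ValueError("resolution_rate must be between 0 and 1")
    return resolution_rate * human_cost


# Hypothetical inputs: $6.00 per human-handled ticket, AI resolves 60% on its own.
threshold = break_even_ai_cost(human_cost=6.00, resolution_rate=0.60)
print(f"AI is cheaper below ${threshold:.2f} per attempt")
print(f"Blended cost at $0.05 per attempt: ${blended_cost_per_ticket(0.05, 6.00, 0.60):.2f}")
```

With these made-up inputs, any AI cost per attempt below $3.60 wins. Because the threshold scales linearly with `resolution_rate`, the resolution rate is usually the number worth watching.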

Same framework applies to content creation, software development, legal research, financial analysis. Figure out the current human labor cost. Track AI capability per dollar. When the crossover point arrives, adoption accelerates quickly.
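The crossover point can be projected in the same spirit. This sketch assumes, as a simplification, that AI cost per output falls by a constant fraction each year while the human cost for equivalent output stays flat; `crossover_year` and the example figures are hypothetical.

```python
from typing import Optional


def crossover_year(ai_cost: float, human_cost: float, annual_decline: float,
                   max_years: int = 50) -> Optional[int]:
    """Return the first year (0 = today) in which AI cost per output is at or below human cost.

    Assumes AI cost shrinks by `annual_decline` (e.g. 0.7 for 70%) every year and human
    cost stays constant. Returns None if no crossover happens within `max_years`.
    """
    if not 0.0 <= annual_decline < 1.0:
        raise ValueError("annual_decline must be in [0, 1)")
    cost = ai_cost
    for year in range(max_years + 1):
        if cost <= human_cost:
            return year
        cost *= 1.0 - annual_decline  # compound the yearly price drop
    return None


# Hypothetical: an AI workflow costs $40 per report today, a human analyst $8,
# and AI prices fall 70% a year.
print(crossover_year(ai_cost=40.0, human_cost=8.0, annual_decline=0.70))
```

Stepping year by year returns a whole number of years and keeps the constant-decline assumption visible; a closed form using logarithms would give a fractional estimate instead.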

The most reliable leading indicator for AI adoption in any category is not model performance on benchmarks. It is cost per useful output compared to human labor cost for equivalent output.
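One way to make that indicator concrete, as a sketch: treat each AI attempt as costing `cost_per_attempt` and producing usable output with probability `success_rate`. If failed attempts are simply retried, the expected number of attempts is `1 / success_rate`. Charging a human review cost on every attempt is an assumption of this sketch, not part of the framework above; all names and figures are hypothetical.

```python
def cost_per_useful_output(cost_per_attempt: float, success_rate: float,
                           review_cost_per_attempt: float = 0.0) -> float:
    """Expected cost to obtain one usable output when failed attempts are retried.

    The number of attempts until success is geometric with mean 1 / success_rate,
    and every attempt (successful or not) pays for generation plus review.
    """
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return (cost_per_attempt + review_cost_per_attempt) / success_rate


def adoption_ratio(ai_cost_per_useful_output: float, human_cost_per_output: float) -> float:
    """Below 1.0, AI is cheaper per equivalent output; the further below, the stronger the pull."""
    return ai_cost_per_useful_output / human_cost_per_output


# Hypothetical: $0.02 per generation, $0.50 of human review per attempt,
# 80% of outputs usable, versus $15 for a human to produce the same output.
ai = cost_per_useful_output(0.02, 0.80, review_cost_per_attempt=0.50)
print(f"AI: ${ai:.3f} per useful output, ratio {adoption_ratio(ai, 15.0):.3f}")
```

With these made-up numbers, the review step rather than the inference price dominates the AI side, so raising `success_rate` or cutting review effort moves the ratio more than cheaper queries would.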

The predictions that will prove most accurate about 2027 and 2028 are the ones being made by people doing this economic calculation, not by people doing capability extrapolations.

FAQ

Q: What AI predictions came true in 2026?

Key predictions that materialized: AI agents becoming mainstream development tools, one-person companies building products that previously required teams, AI handling 50-60% of routine coding, and enterprise AI adoption accelerating significantly. Predictions that did not materialize: AGI arriving, developers being completely displaced, and AI-generated content becoming indistinguishable from human content.

Q: What surprised the AI industry most in 2026?

The biggest surprises were how quickly AI agents became reliable for production software development, the emergence of one-person AI-powered companies generating significant revenue, the speed of enterprise adoption despite initial skepticism, and developer roles shifting from coding toward architecture faster than expected.

Q: What are the most important AI trends heading into 2027?

Key trends are multi-agent systems becoming production-standard, AI agents with persistent memory and learning, voice and multi-modal interfaces maturing, AI regulation taking effect globally, and the continued shift from AI-as-tool to AI-as-workforce across industries.


